Seeing is Free, Speaking is Not: Uncovering the True Energy Bottleneck in Edge VLM Inference

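Presents the first systematic energy profiling of on-device VLM inference, showing that autoregressive decoding, not visual token processing, dominates energy consumption (86–97%). This overturns the conventional assumption that visual token reduction is the primary efficiency strategy.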

Mar 27, 2026

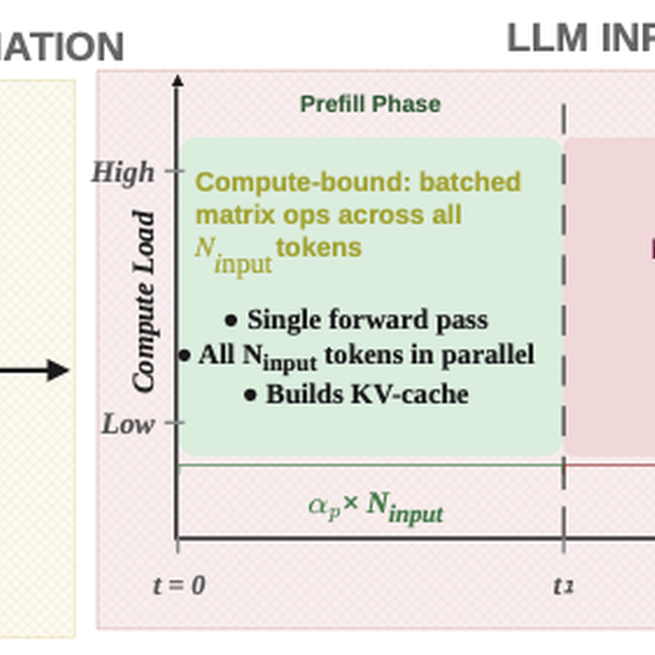

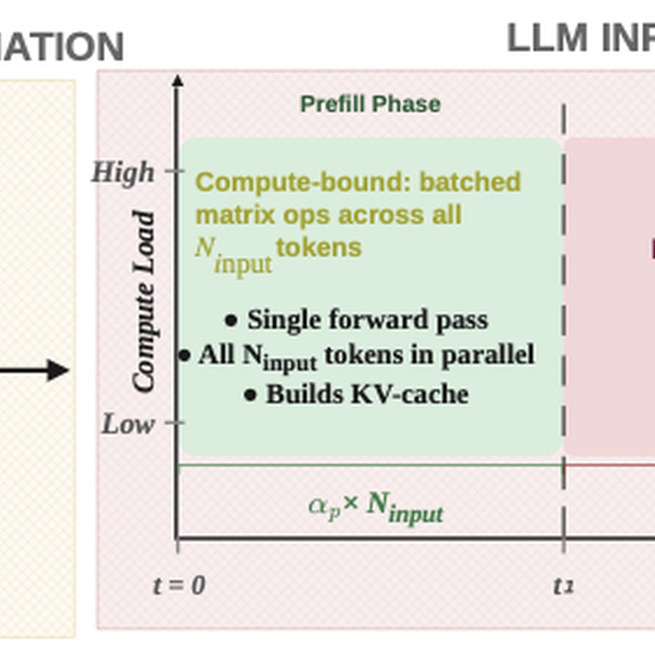
